MPAIL2: Planning from Observation and Interaction

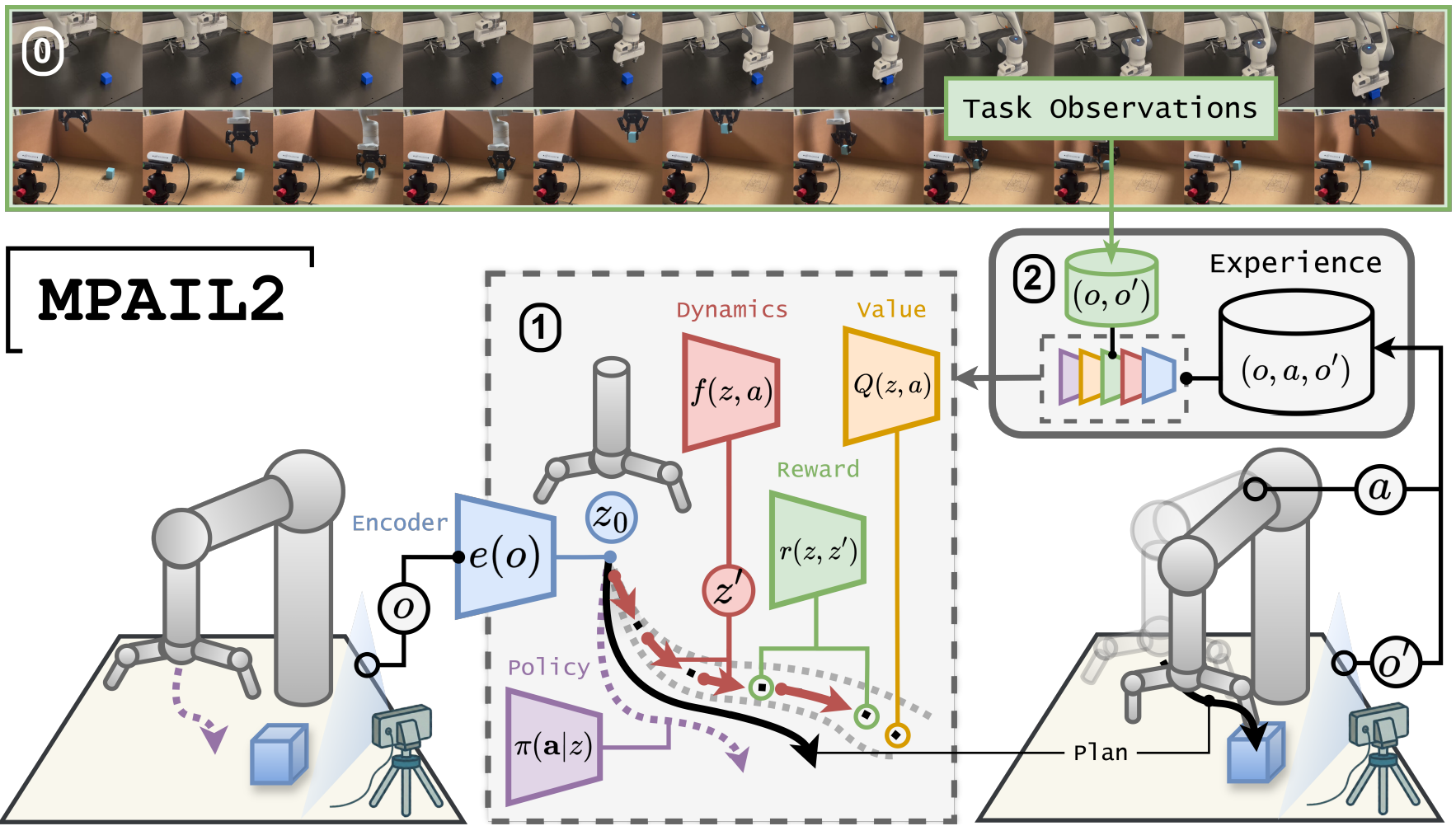

A few days ago, our team at the Robot Learning Lab @ UW, led by Tyler Han, released a paper titled “Planning from Observation and Interaction”. In this work, we propose Model Predictive Adversarial Imitation Learning 2 (MPAIL2), an algorithm for Inverse Reinforcement Learning from Observation (IRLfO).

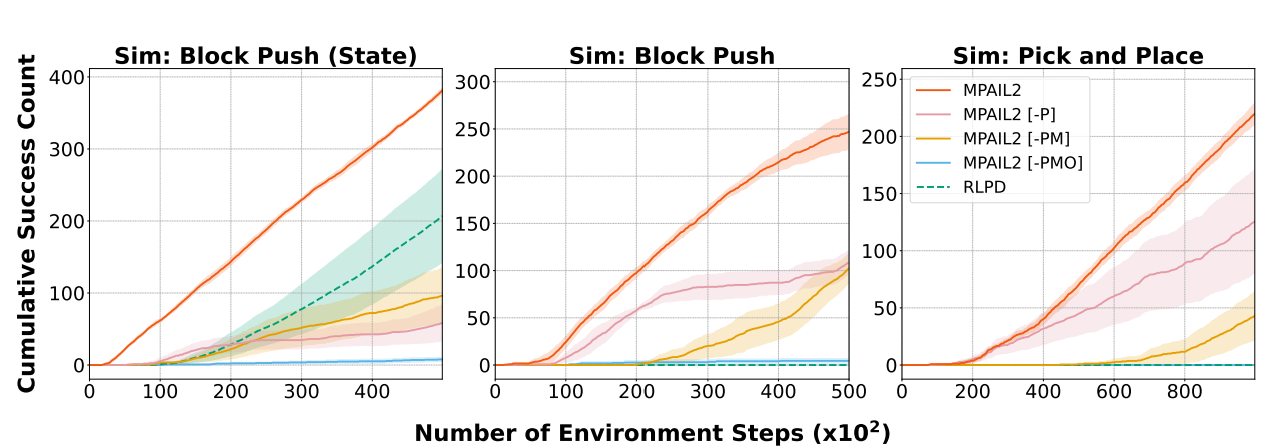

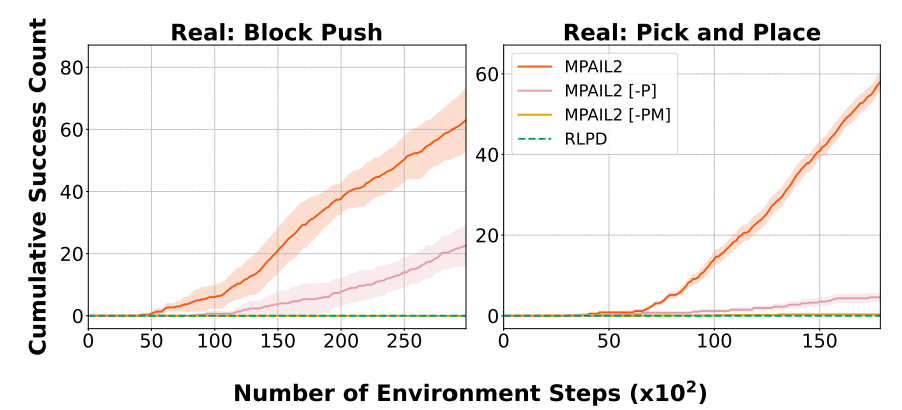

To the best of our knowledge, MPAIL2 is the first IRLfO system demonstrated on a real robot. In our experiments, MPAIL2 learns new manipulation tasks in under an hour, without assuming a known reward function, without action labels in the expert data, and without any pre-training. Beyond showing that real-world IRLfO is possible, MPAIL2 is significantly more sample-efficient than state-of-the-art RL and IRL baselines.

MPAIL2 combines world modeling with off-policy adversarial reward learning and deploys the agent through online planning. Instead of learning just a policy, the agent learns an encoder, dynamics model, reward function, value function, and a multi-step policy. Together, these components allow the robot to model causal structure — such as physical interactions and contact — and plan over predicted future states.
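To make the planning loop concrete, here is a minimal sketch of planning over predicted latent states. It is not the paper's implementation. The toy linear `dynamics`, the distance-based `reward` and `value`, the `GOAL` vector, and the random-shooting planner are all hypothetical stand-ins chosen for illustration. The learned policy, which would normally propose candidate action sequences, is replaced here by uniform sampling.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-ins for the learned components (toy linear maps, not real networks).
LATENT_DIM, ACTION_DIM = 4, 2
A = 0.9 * np.eye(LATENT_DIM)
B = rng.normal(scale=0.1, size=(LATENT_DIM, ACTION_DIM))
GOAL = np.ones(LATENT_DIM)  # assumed "expert-preferred" latent state


def encode(observation):
    """Encoder placeholder: flatten the observation and keep LATENT_DIM features."""
    return np.asarray(observation, dtype=float).ravel()[:LATENT_DIM]


def dynamics(z, a):
    """Latent dynamics placeholder: batched (z, a) -> next latent state."""
    return z @ A.T + a @ B.T


def reward(z, z_next):
    """Reward over latent transitions only; it never sees the action."""
    return -np.sum((z_next - GOAL) ** 2, axis=-1)


def value(z):
    """Value placeholder: estimate of return beyond the planning horizon."""
    return -np.sum((z - GOAL) ** 2, axis=-1)


def plan(observation, horizon=5, num_samples=256, gamma=0.99):
    """Random-shooting planner: sample action sequences, roll them out in
    latent space, score them, and return the first action of the best one."""
    z0 = encode(observation)
    actions = rng.uniform(-1.0, 1.0, size=(num_samples, horizon, ACTION_DIM))
    z = np.repeat(z0[None, :], num_samples, axis=0)
    returns = np.zeros(num_samples)
    for t in range(horizon):
        z_next = dynamics(z, actions[:, t])
        returns += gamma**t * reward(z, z_next)
        z = z_next
    # Bootstrap with the value function at the end of the horizon.
    returns += gamma**horizon * value(z)
    best = int(np.argmax(returns))
    return actions[best, 0], returns
```

Calling `plan(np.zeros(8))` returns one action plus the scores of every sampled sequence. Because each candidate trajectory is scored explicitly, the scores can be inspected before the robot acts.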

The world model (encoder + dynamics) learns latent dynamics directly from images. The reward function captures what the expert prefers from observation-only demonstrations. The value function estimates long-term return under the learned reward, and the policy maximizes predicted multi-step returns.
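As a rough illustration of learning a reward from observation-only demonstrations, the sketch below trains a GAIL-style discriminator on state transitions `(z, z_next)` with no action input. It is a simplification under stated assumptions, not the MPAIL2 reward model: the discriminator is a plain logistic regression, the transitions come from the hypothetical `make_transitions` generator instead of an encoder, and the reward is taken to be the discriminator's logit.

```python
import numpy as np

rng = np.random.default_rng(0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def make_transitions(step_mean, n=512, dim=3):
    """Hypothetical toy data: latent transitions that drift by `step_mean`."""
    z = rng.normal(size=(n, dim))
    z_next = z + step_mean + 0.1 * rng.normal(size=(n, dim))
    return np.concatenate([z, z_next], axis=1)  # features are (z, z_next) only


# Expert transitions drift one way, agent transitions the other (assumed setup).
expert = make_transitions(step_mean=0.5)
agent = make_transitions(step_mean=-0.5)

x = np.concatenate([expert, agent])
y = np.concatenate([np.ones(len(expert)), np.zeros(len(agent))])

# Logistic-regression discriminator trained by gradient descent on the
# binary cross-entropy between expert (label 1) and agent (label 0).
w = np.zeros(x.shape[1])
b = 0.0
learning_rate = 0.5
for _ in range(500):
    p = sigmoid(x @ w + b)
    grad_logits = (p - y) / len(y)
    w -= learning_rate * (x.T @ grad_logits)
    b -= learning_rate * grad_logits.sum()


def learned_reward(transitions):
    """Reward = discriminator logit: higher means 'looks more like the expert'."""
    return transitions @ w + b
```

Since the features contain only states, the expert data needs no action labels. In an adversarial loop the agent's transitions would be refreshed from its own experience as the discriminator and the agent are trained in alternation.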

The result is a major improvement in sample efficiency. While baseline methods such as RLPD and DAC fail to achieve success after more than an hour of real-world training, MPAIL2 consistently solves image-based pushing and pick-and-place tasks in 40 minutes or less.

Importantly, MPAIL2 is built around a world model, which enables transfer. In our transfer experiments, robots trained on one task were exposed to a new set of task observations. MPAIL2 adapted to the new task roughly twice as fast as training from scratch, while the baselines either failed to transfer or learned significantly more slowly.

Beyond improvements in training efficiency and transferability, MPAIL2’s planning-based structure naturally supports interpretability, steerability, and safety. By explicitly predicting and evaluating future trajectories, the system provides a more practical and transparent path forward for real-world reinforcement learning.

Full details and more demos are available in the paper and project resources below: